If you’re starting out with Power BI for the first time, or you’ve found Power BI has crept its way into your business and you’re wondering how best to implement a robust reporting solution, you are not alone. In fact, the service has been steadily growing year-on-year in terms of number of users and features, to the point it has moved beyond being a market disruptor. Every year it regularly sits in the top-right corner of the Gartner Magic Quadrant for Analytics and BI Platforms(1).

Along with this large uptake in users, there is also no shortage of support in the wider Power BI Community – forums, blog posts (Breaking the fourth wall, I’m being very self-aware, saying that) and videos. This means help or an answer to a question is never too far away, so it’s all cocktails and canapés? Not so fast…

The ease with which people can get their hands on Power BI and start building reports, can be a bit of a hindrance when it comes to producing a coherent plan for introducing it into your business. It isn’t a case of just introducing the application to your userbase and letting them get on with it – BI/Analytics never has been about that.

Dataset-only Approach

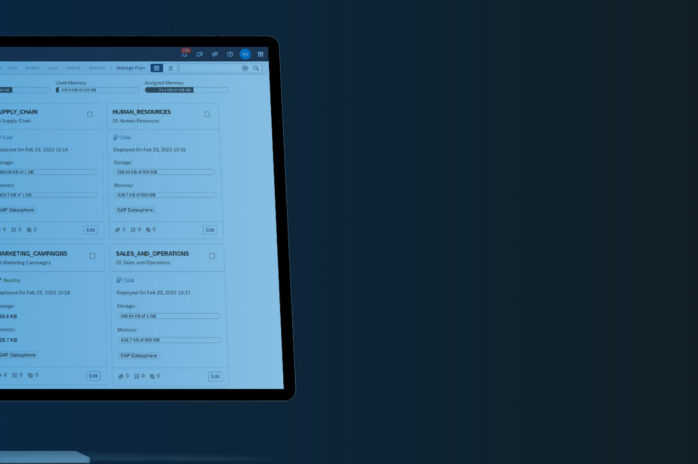

The first interactions a lot of people have with Power BI is in the desktop tool. Here they will add their data, build a dataset and drop in some visuals. After that, they may often think sharing reports and building more content is limited to just publishing those datasets, installing a gateway to connect to their on-premise data and then set a schedule to refresh it. The high-level data flow architecture to explain this process is shown below.

In this example, all the different datasets are operating independently, refreshing data from the sources via the gateway and carrying out their own transformations. This is the Power BI Service at its simplest. It’s also quite problematic for a whole host of reasons, such as the same transformations are taking place over and over again, for different datasets. This means you are repeatedly asking the gateway to repeat the same transformational steps; maybe you’re even making some slightly different transformations across those datasets. In all, you have a process that is inefficient, you’ve potentially lost the single version of the truth, and it’s all gone a little chaotic with datasets scattered around different workspaces. Worse still, you may also have people with access to source databases that shouldn’t really have that access? Or has that been resolved by limiting who can build datasets in the first place? That can mean you have a development bottleneck, and users still copying data out to Excel.

The best way to resolve this issue, or prevent it from happening in the first place, is to centralise your data transformations and allow users to self-serve their datasets from that prepared data source. Centralising data transformations means there is minimal duplication of effort. Self-Service dataset production is possible because the source tables are easier to join together, and data has been changed to meet the reporting needs of your business.

There are 2 ways we can overcome this challenge – the first method (and by far the best) is with a good old data warehouse, the Old Faithful of the BI world. The second method is a little more recent – Power BI Datamarts, which serves as a halfway house between the chaotic dataset-only approach and the reliable data warehouse approach.

Data Warehouse Approach

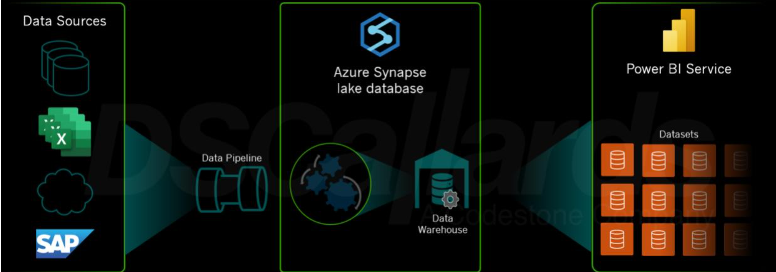

This is by far the best approach to take for increasing the engagement of Power BI within your business. You have a designed and developed technical solution, that delivers the answers to the business questions. It’s an entirely scalable solution that can easily grow as your reporting needs grow, whilst still giving you the ability to control it all to a very granular level. The architecture at a very high level looks like the below.

In this example, the data transformation takes place in Azure Synapse over a lake database, but it could just as easily be an on-premise or other cloud solution using any one of the numerous tools found in the data transformation market.

The design process for a data warehouse is one that is driven by determining the business KPIs or operational reporting needs, finding where the data is that satisfies those needs and breaking it out into the corresponding Fact and Dimension tables that describes it all. The development process is one that builds the required transformations, pulling data through a series of pipelines, to make these fact and dimension tables – contrary to what a lot of people think this is a very agile process that starts returning on the investment very quickly; as the dimensions and facts are produced, they can be rapidly tested and start being used whilst the rest of the data landscape is still being built.

From this point in the data flow, users can build their own datasets that are much simpler and less intensive on the data transformations. A data warehouse is, therefore, the most business-friendly and future-proof solution for the vast majority of business reporting needs.

Power BI Datamart Approach

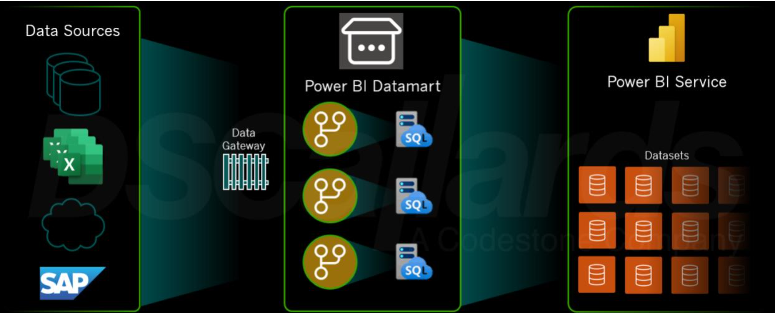

Think of this method as ‘diet data warehouse’. Here’s the high level architecture to show you the flow of data.

The start and the end point of this approach should look (if it’s been designed correctly) as close to the data warehouse approach as possible. So more people building datasets from the same sort of simplified model – it’s just the transformation part and the storing of that transformed data that is different.

At this point, we need to add a quick caveat – this method represents a simpler method of transforming data that is closer to the dataset-only approach, than it is the data warehouse approach. In fact, it’s the same Power Query tool transforming data in the datamart that you find in the Power BI desktop application, represented through the dataflows that are held within the datamart. The datamart sits within a Power BI Workspace, and the data is held in a data lake within Power BI. This means there are three important points:

1. The datasets contribute to the overall size limitations for a user account in the Power BI Service.

2. All the data transformations go through the gateway, which could present other processing power limitations.

3. Unlike a data warehouse, the datamart processing could be subjected to unexpected throttling when it is refreshed, leading to delays in transformed data availability.

Summary

We have seen 3 different approaches to handling data in Power BI.

- The dataset-only approach, which may be the one that springs to mind when you first start using Power BI, can be the most inefficient and restrictive approach when your analytical output grows.

- The datamart approach is useful for cases where you want to present the users with a simpler pre-transformed data source to increase the number of users producing datasets within your business.

- The data warehouse approach still remains the most powerful and business-friendly approach for transforming data, and giving users more analytical capabilities.

Get in touch with us to learn how we can help you!

References